As computer vision researchers, we believe that every pixel can tell a story. However, there seems to be a writer's block settling into the field when it comes to dealing with large images. Large images are no longer rare: the cameras we carry in our pockets and those orbiting our planet snap pictures so big and detailed that they stretch our current best models and hardware to their breaking points. Generally, we face a quadratic increase in memory usage as a function of image size.

Today, we make one of two sub-optimal choices when handling large images: down-sampling or cropping. Both methods incur significant losses in the amount of information and context present in an image. We take another look at these approaches and introduce $x$T, a new framework to model large images end-to-end on contemporary GPUs while effectively aggregating global context with local details.

Architecture for the $x$T framework.

Why Bother with Big Images Anyway?

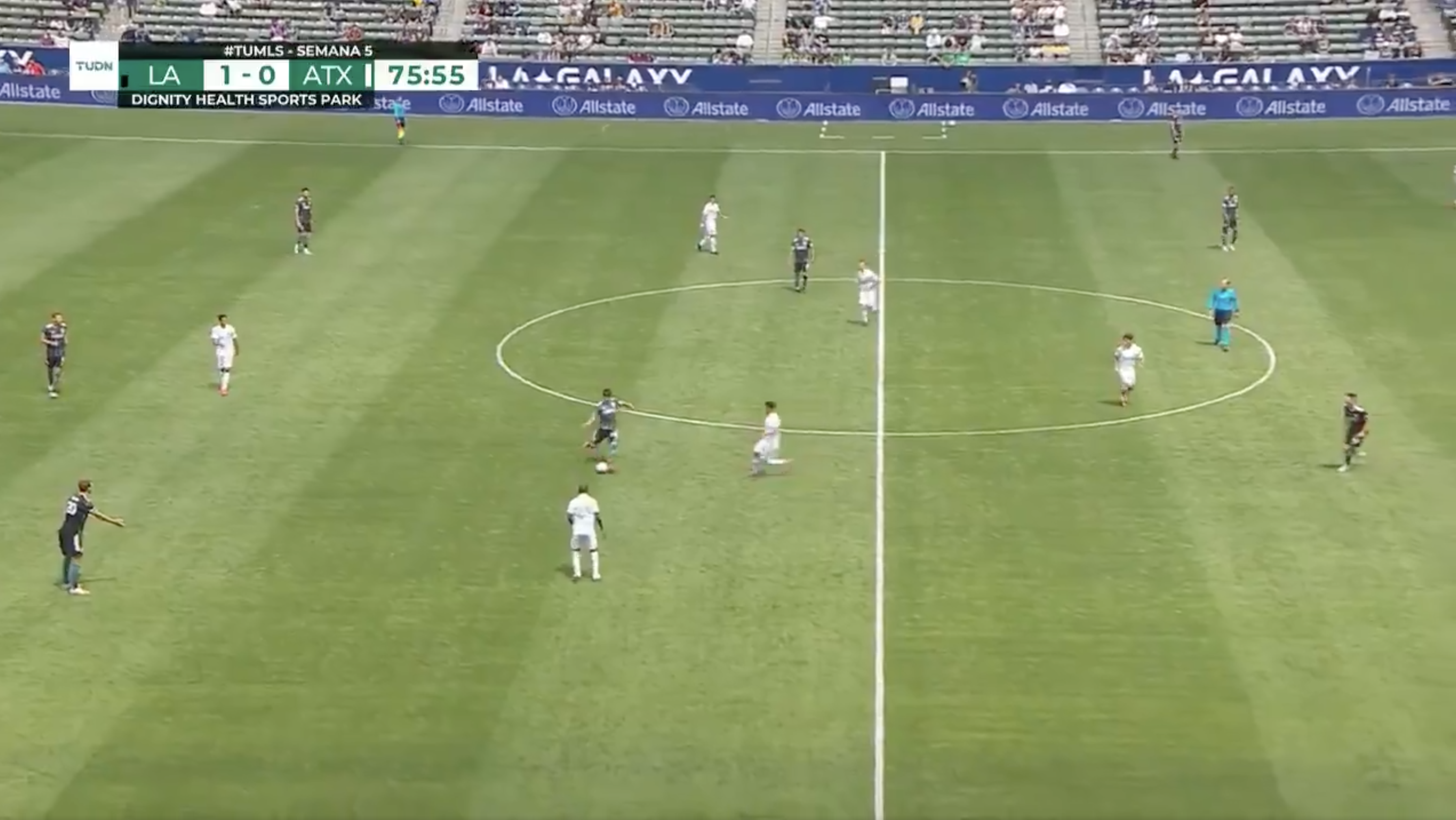

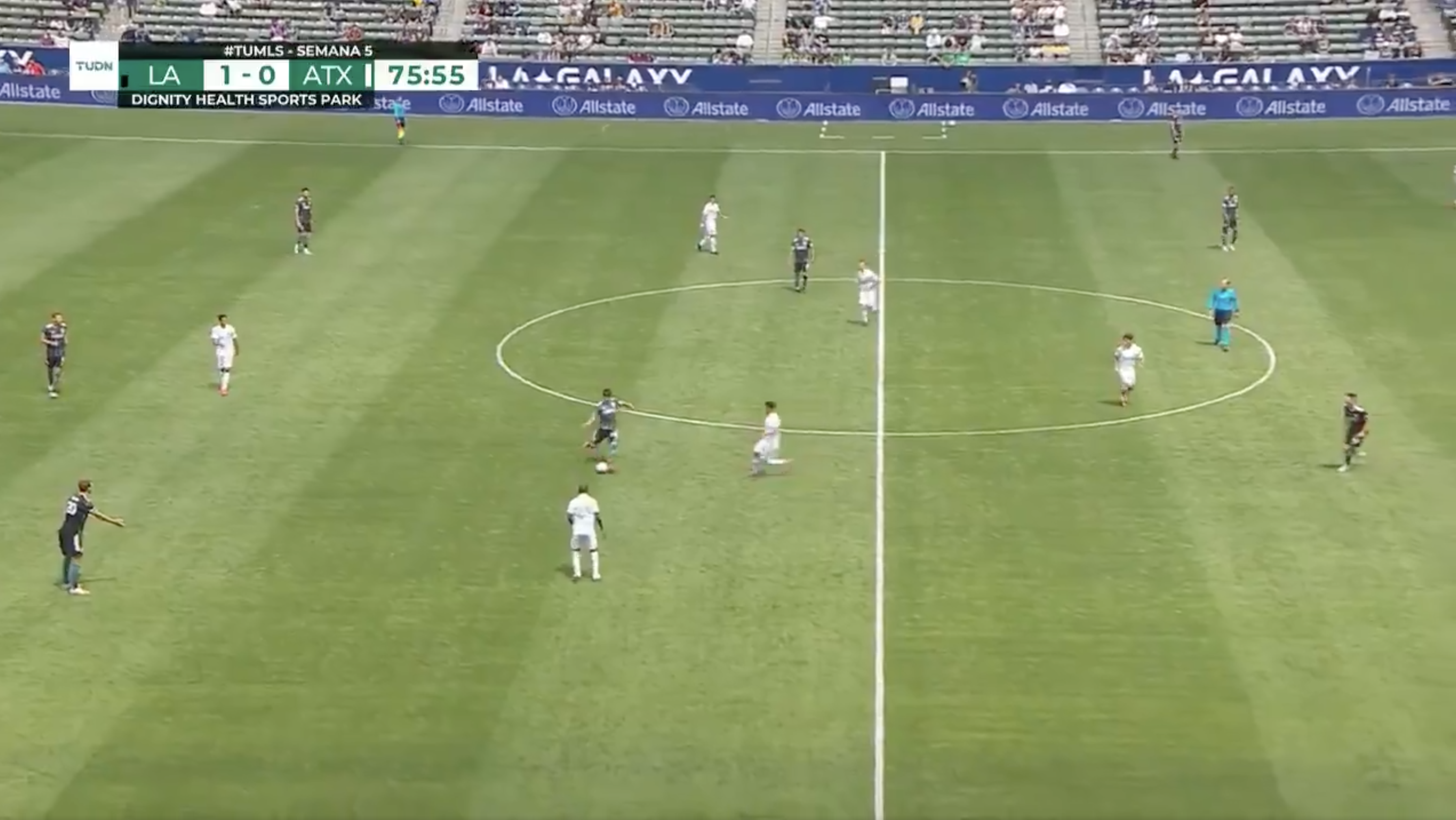

Why bother handling large images anyway? Picture yourself in front of your TV, watching your favorite soccer team. The field is dotted with players all over, with action occurring only on a small portion of the screen at a time. Would you be satisfied, however, if you could only see a small region around where the ball currently was? Alternatively, would you be satisfied watching the game in low resolution? Every pixel tells a story, no matter how far apart they are. This is true in all domains, from your TV screen to a pathologist viewing a gigapixel slide to diagnose tiny patches of cancer. These images are treasure troves of information. If we can't fully explore the wealth because our tools can't handle the map, what's the point?

Sports are fun when you know what's going on.

That's precisely where the frustration lies today. The bigger the image, the more we need to zoom out to see the whole picture and zoom in for the nitty-gritty details at the same time, making it a challenge to grasp both the forest and the trees. Most current methods force a choice between losing sight of the forest or missing the trees, and neither option is great.

How $x$T Tries to Fix This

Imagine trying to solve a massive jigsaw puzzle. Instead of tackling the whole thing at once, which would be overwhelming, you start with smaller sections, get a good look at each piece, and then figure out how they fit into the bigger picture. That's basically what we do with large images with $x$T.

$x$T takes these gigantic images and chops them into smaller, more digestible pieces hierarchically. This isn't just about making things smaller, though. It's about understanding each piece in its own right and then, using some clever techniques, figuring out how these pieces connect on a larger scale. It's like having a conversation with each part of the image, learning its story, and then sharing those stories with the other parts to get the full narrative.

Nested Tokenization

At the core of $x$T lies the concept of nested tokenization. In simple terms, tokenization in the realm of computer vision is akin to chopping up an image into pieces (tokens) that a model can digest and analyze. However, $x$T takes this a step further by introducing a hierarchy into the process, hence nested.

Imagine you're tasked with analyzing a detailed city map. Instead of trying to take in the entire map at once, you break it down into districts, then neighborhoods within those districts, and finally, streets within those neighborhoods. This hierarchical breakdown makes it easier to manage and understand the details of the map while keeping track of where everything fits in the larger picture. That's the essence of nested tokenization: we split an image into regions, each of which can be split into further sub-regions depending on the input size expected by a vision backbone (what we call a region encoder), before being patchified to be processed by that region encoder. This nested approach allows us to extract features at different scales on a local level.
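To make the hierarchy concrete, here is a minimal sketch of the splitting step only. It is not the released $x$T code: the function names, the `(top, left, height, width)` box layout, and the sizes 512, 256, and 16 are all assumptions chosen for illustration, and the sketch assumes every size divides the one above it evenly.

```python
from typing import Any, List, Sequence, Tuple

Box = Tuple[int, int, int, int]  # (top, left, height, width), a hypothetical layout


def split_grid(box: Box, cell: int) -> List[Box]:
    """Split a box into non-overlapping square cells of side `cell`."""
    top, left, height, width = box
    if height % cell or width % cell:
        raise ValueError("box size must be divisible by the cell size")
    return [
        (top + r, left + c, cell, cell)
        for r in range(0, height, cell)
        for c in range(0, width, cell)
    ]


def nested_tokenize(box: Box, sizes: Sequence[int]) -> Any:
    """Recursively split `box`, one nesting level per entry in `sizes`.

    With sizes (region, sub_region, patch) the result is a list of regions,
    each a list of sub-regions, each a list of patch boxes.
    """
    if not sizes:
        return box
    return [nested_tokenize(cell, sizes[1:]) for cell in split_grid(box, sizes[0])]


# A 1024 x 1024 image: 512-pixel regions, 256-pixel sub-regions, 16-pixel patches.
tokens = nested_tokenize((0, 0, 1024, 1024), sizes=(512, 256, 16))
print(len(tokens), len(tokens[0]), len(tokens[0][0]))
```

Only coordinates are produced here. In a real pipeline each patch box would index into the pixel array, and the sub-region size would be set by whatever input resolution the chosen backbone expects.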

Coordinating Region and Context Encoders

Once an image is neatly divided into tokens, $x$T employs two types of encoders to make sense of these pieces: the region encoder and the context encoder. Each plays a distinct role in piecing together the image's full story.

The region encoder is a standalone "local expert" which converts independent regions into detailed representations. However, since each region is processed in isolation, no information is shared across the image at large. The region encoder can be any state-of-the-art vision backbone. In our experiments we have utilized hierarchical vision transformers such as Swin and Hiera and also CNNs such as ConvNeXt!

Enter the context encoder, the big-picture guru. Its job is to take the detailed representations from the region encoders and stitch them together, ensuring that the insights from one token are considered in the context of the others. The context encoder is generally a long-sequence model. We experiment with Transformer-XL (and our variant of it called Hyper) and Mamba, though you could use Longformer and other new advances in this area. Even though these long-sequence models are generally made for language, we demonstrate that it is possible to use them effectively for vision tasks.
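The division of labor between the two encoders can be sketched with toy stand-ins. Everything below is an assumption for illustration: the "region encoder" is just a mean and a max over pixels rather than a real backbone such as Swin, and the "context encoder" is a single pass of unparameterized softmax self-attention rather than Transformer-XL or Mamba. The point is only the data flow: regions are encoded in isolation, then their features are mixed across the whole image.

```python
import math
from typing import List

Vector = List[float]


def region_encoder(region: List[List[float]]) -> Vector:
    """Toy stand-in for a vision backbone: summarize one region in isolation."""
    pixels = [p for row in region for p in row]
    return [sum(pixels) / len(pixels), max(pixels)]


def context_encoder(features: List[Vector]) -> List[Vector]:
    """Toy stand-in for a long-sequence model: weight-free self-attention."""
    scale = math.sqrt(len(features[0]))
    mixed = []
    for query in features:
        # Similarity of this region's feature to every region's feature.
        scores = [sum(q * k for q, k in zip(query, key)) / scale for key in features]
        peak = max(scores)  # subtract the max for a numerically stable softmax
        weights = [math.exp(s - peak) for s in scores]
        total = sum(weights)
        # Each output is a weighted average over all region features.
        mixed.append([
            sum(w * value[d] for w, value in zip(weights, features)) / total
            for d in range(len(query))
        ])
    return mixed


# Three tiny 2 x 2 "regions" of a hypothetical single-channel image.
regions = [
    [[0.0, 0.0], [0.0, 0.0]],
    [[1.0, 1.0], [1.0, 1.0]],
    [[0.0, 2.0], [0.0, 2.0]],
]
local = [region_encoder(r) for r in regions]  # no information shared yet
contextual = context_encoder(local)           # every region now sees the others
print(local)
print(contextual)
```

Swapping either function for a stronger model leaves the surrounding loop unchanged, which is the sense in which the two encoders are interchangeable components.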

The magic of $x$T is in how these components (the nested tokenization, region encoders, and context encoders) come together. By first breaking down the image into manageable pieces and then systematically analyzing these pieces both in isolation and in conjunction, $x$T manages to maintain the fidelity of the original image's details while also integrating long-distance, overarching context, all while fitting massive images, end-to-end, on contemporary GPUs.

Results

We evaluate $x$T on challenging benchmark tasks that span well-established computer vision baselines to rigorous large image tasks. In particular, we experiment with iNaturalist 2018 for fine-grained species classification, xView3-SAR for context-dependent segmentation, and MS-COCO for detection.

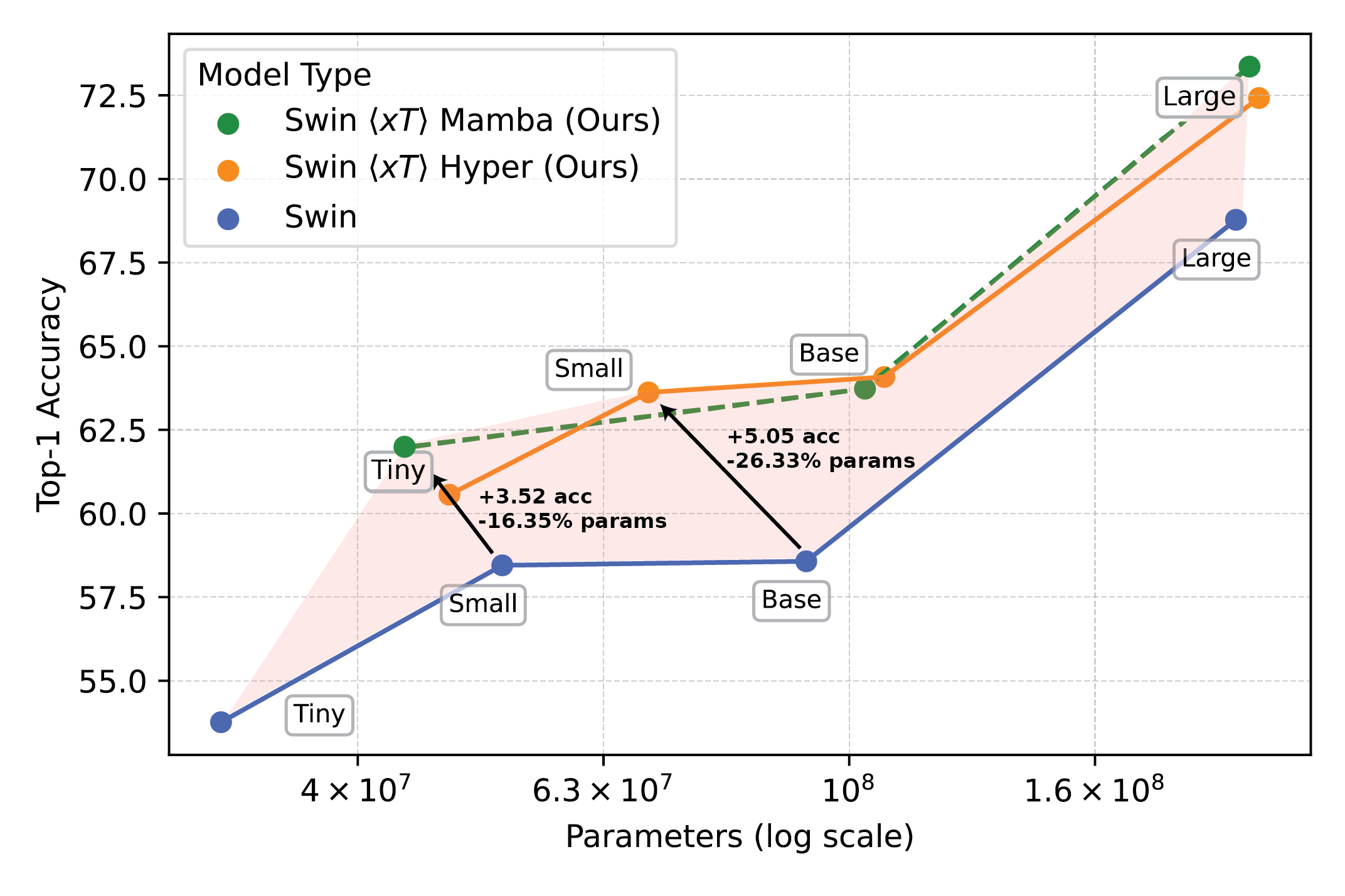

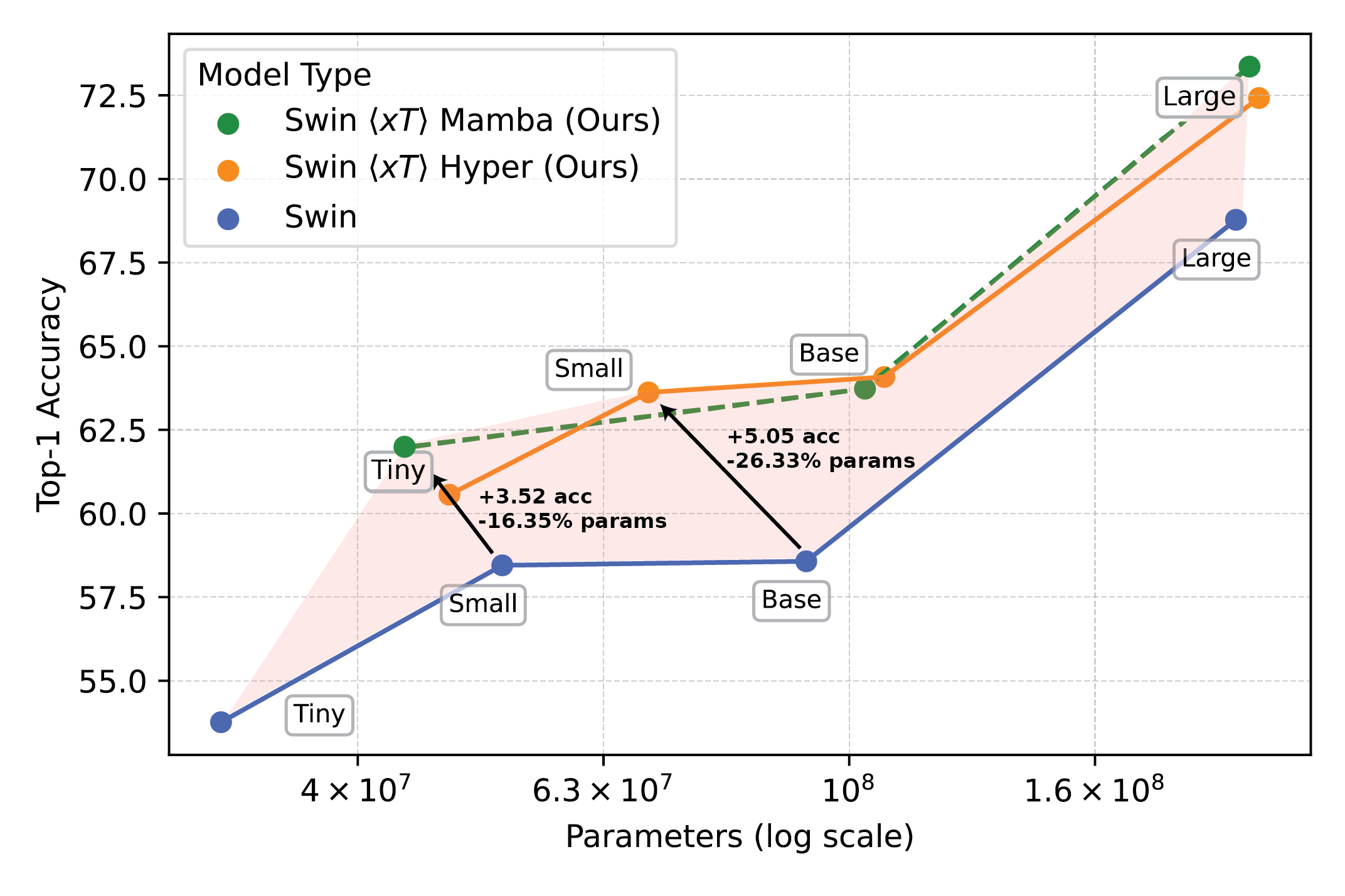

Powerful vision models used with $x$T set a new frontier on downstream tasks such as fine-grained species classification.

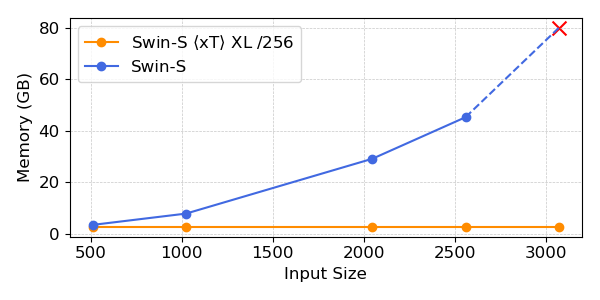

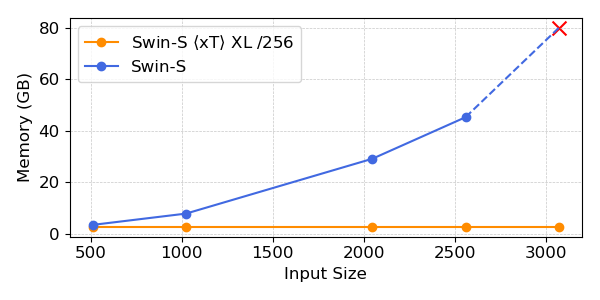

Our experiments show that $x$T can achieve higher accuracy on all downstream tasks with fewer parameters while using much less memory per region than state-of-the-art baselines*. We are able to model images as large as 29,000 x 25,000 pixels on 40GB A100s while comparable baselines run out of memory at only 2,800 x 2,800 pixels.
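For a sense of scale, the two image sizes quoted above can be compared with some back-of-the-envelope arithmetic. The 16-pixel patch size and the idea of counting dense pairwise interactions between patches are assumptions made purely for illustration; they are not a description of how any particular baseline was configured.

```python
import math


def patch_tokens(height: int, width: int, patch: int = 16) -> int:
    """Number of patch tokens covering an image (patch size is an assumption)."""
    return math.ceil(height / patch) * math.ceil(width / patch)


large_pixels = 29_000 * 25_000
small_pixels = 2_800 * 2_800
pixel_ratio = large_pixels / small_pixels

large_tokens = patch_tokens(29_000, 25_000)
small_tokens = patch_tokens(2_800, 2_800)

# If every token interacted with every other token (dense attention),
# the number of pairs would grow with the square of the token count.
pair_ratio = (large_tokens ** 2) / (small_tokens ** 2)

print(f"pixels: {large_pixels:,} vs {small_pixels:,} ({pixel_ratio:.1f}x)")
print(f"tokens: {large_tokens:,} vs {small_tokens:,}")
print(f"dense token pairs: about {pair_ratio:,.0f}x more")
```

The larger image has roughly 92 times as many pixels, and under the dense-interaction assumption the pairwise cost grows by roughly the square of that factor, which is why processing regions separately and passing only their features to a long-sequence model matters.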

*Depending on your choice of context model, such as Transformer-XL.

Why This Matters More Than You Think

This approach isn't just cool; it's necessary. For scientists tracking climate change or doctors diagnosing diseases, it's a game-changer. It means creating models which understand the full story, not just bits and pieces. In environmental monitoring, for example, being able to see both the broader changes over vast landscapes and the details of specific areas can help in understanding the bigger picture of climate impact. In healthcare, it could mean the difference between catching a disease early or not.

We are not claiming to have solved all the world's problems in one go. We hope that with $x$T we have opened the door to what's possible. We're stepping into a new era where we don't have to compromise on the clarity or breadth of our vision. $x$T is our big leap towards models that can juggle the intricacies of large-scale images without breaking a sweat.

There's a lot more ground to cover. Research will evolve, and hopefully, so will our ability to process even bigger and more complex images. In fact, we are working on follow-ons to $x$T which will expand this frontier further.

In Conclusion

For a complete treatment of this work, please check out the paper on arXiv. The project page contains a link to our released code and weights. If you find the work useful, please cite it as below:

@article{xTLargeImageModeling,
  title={xT: Nested Tokenization for Larger Context in Large Images},
  author={Gupta, Ritwik and Li, Shufan and Zhu, Tyler and Malik, Jitendra and Darrell, Trevor and Mangalam, Karttikeya},
  journal={arXiv preprint arXiv:2403.01915},
  year={2024}
}
